Further Sunlight on Government Data

Monday, July 20th, 2009

In a previous post, we discussed some of the interesting things the US government is doing to make its data more widely available, culminating in the Data.gov website. This website is now up and running and has definitely made some progress since we've last discussed it.

In a previous post, we discussed some of the interesting things the US government is doing to make its data more widely available, culminating in the Data.gov website. This website is now up and running and has definitely made some progress since we've last discussed it.

Data.gov is broken down into three main catalogs:

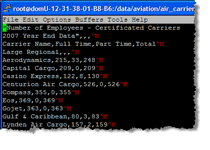

- Raw Data Catalog (with data files available in XML, CSV, KML, etc.)

- Tools Catalog (list of tools built to work with various open data sets)

- Geodata Catalog (links to Federal geospatial data)

They've also tried to make it easier to search for data sets, which like video, is quite reliant on being tagged with good, meaningful descriptions and related meta data. It's a hard nut to crack. For example, government agencies tend to release data sets on an annual basis, so you'll have, say, 5 different data sets (and counting) for the “Public Libraries Survey” from 2004 through 2008. If your search terms aren't specific enough, these repetitious items tend to clutter up the search results. As Data.gov continues to add more data sets, hopefully they can refine this area further.

But, then again, maybe they won't have to. The folks at Sunlight Labs, whose mission is to build technology that makes government more transparent and accountable, has recently announced a project called The National Data Catalog. It will be a tool that aims to take the Data.gov concept and improve upon it. From the announcement:

“We think we can add value on top of things like Data.gov and the municipal data catalogs by autonomously bringing them into one system, manually curating and adding other data sources and providing features that, well, Government just can't do. There'll be community participation so that people can submit their own data sources, and we'll also catalog non-commercial data that is derivative of government data like OpenSecrets. We'll make it so that people can create their own documentation for much of the undocumented data that government puts out and link to external projects that work with the data being provided.”

This should be interesting to watch. As the Sunlight folks say in a later post, they are not out to replicate Data.gov, but to stand on its shoulders (similar to how, say, Weather.com relies on and improves upon the National Weather Service). Given the nature of the beast, data sets need to be described really well in order to be both searchable and useful. Hopefully the community aspect, in particular, can help give this data more utility. If any are tech savvy folks interested in either following the project or contributing with code, here's the project page.

It was intriguing to see how all this newfangled web 2.0 technology was applied during the US presidential campaign this past year (organization, multimedia, etc.). It's also quite interesting to hear about

It was intriguing to see how all this newfangled web 2.0 technology was applied during the US presidential campaign this past year (organization, multimedia, etc.). It's also quite interesting to hear about

These are some very good times for those of you out there who like

These are some very good times for those of you out there who like

I'm slightly (fashionably?) late to this party, but I just came across a new website called

I'm slightly (fashionably?) late to this party, but I just came across a new website called  There's a surprising amount of

There's a surprising amount of